TL;DR: Modern conflict targets human cognition: adversaries exploit social platforms, deepfakes, and identity to fracture trust and stall national response.

Battlespace of the Mind: The Invisible Tactics of Cognitive Warfare

I have spent years studying persuasion and political behavior. Pair that with social media, foreign influence operations, and advances in AI, and the reading gets dark fast.

Watch your children scroll on their phones, and it gets terrifying faster.

You check your phone; you see a video of a politician saying something unforgivable. You see a post about a neighbor being a traitor; you feel the surge of anger. This is not just social media friction: it is a calculated strike. Adversaries no longer need to destroy infrastructure to win. They can degrade perception. If they can distort how a population interprets events, they can blunt resistance before it forms. This is the reality of the twenty-first-century battlefield.

The paper, New Perspectives on Cognitive Warfare, outlines a shift in global conflict. Authors Laurie Fenstermacher, David Uzhca, Katie Larson, Christine Vitiello, and Steve Shellman presented this work at the 2023 SPIE Defense and Commercial Sensing conference.

They argue that cognitive warfare is a distinct domain: it sits alongside land, maritime, air, space, and cyber. The objective is not to change a single fact: the objective is to change how you process facts.

Citation & Links

Title: New Perspectives on Cognitive Warfare

Link: [CognitiveWarfare2023SPIEpaper_FINAL.pdf]

Peer Review Status: Peer Reviewed (Presented at SPIE Defense + Commercial Sensing conference)

Citation: Fenstermacher, Laurie, David Uzhca, Katie Larson, Christine Vitiello, and Steve Shellman. 2023. “New Perspectives on Cognitive Warfare.” Proceedings of SPIE 12538.

Methodology

The authors analyze Russian and Chinese military doctrine, review case studies such as election interference in the Baltic states, the 2014 annexation of Crimea, and influence operations in Taiwan, and propose a “House Model” that integrates neuroscience, behavioral science, psychology, AI, and information systems.

They define cognitive warfare as coordinated action designed to influence, disrupt, corrupt, or usurp human cognition at scale.

The shift is subtle but profound. Information warfare targets content. Cognitive warfare targets processing.

Results and Findings

The study defines cognitive warfare as a new domain of battle, joining land, sea, air, space, and cyber. It moves beyond simple “information warfare” by targeting how people think rather than just the information they see.

Key findings include:

- The Target is Universal: Attacks aim at leaders, soldiers, and entire populations. Anyone with a smartphone is in the battlespace.

- Trust is the Vulnerability: Adversaries look for cognitive centers of gravity: institutions, processes, and values that a society trusts. Once trust erodes, coordination collapses.

- Breaking the Will: Success in battle depends on breaking the enemy’s will to fight and sustaining one’s own. Cognitive warfare demotivates or discourages a population from combating an enemy.

- Reality Manipulation: In 2014, Russia used unmarked military units and leader messaging to mislead international public opinion. They altered the sense of reality: making people believe a takeover was just an exercise.

- Technological Acceleration: Artificial intelligence, deepfakes, and virtual reality allow manipulations to evolve faster than policy makers can respond.

Deep Dive: The House Model

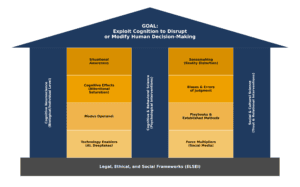

The House Model of Cognitive Warfare, as detailed in the 2023 SPIE paper, is a structural framework designed to categorize the diverse fields of knowledge and operational layers required to conduct or defend against attacks on human cognition. It organizes complex scientific disciplines and military tactics into a single, cohesive hierarchy to help researchers and strategists identify where more study is needed.

The model is structured like a building, with each level representing a different aspect of achieving cognitive warfare goals.

The Foundation: Legal and Ethical Frameworks

The base of the house rests on the ELSEI (Ethical, Legal, and Social Implications) framework. This includes the legal and defense doctrines, such as NATO’s Security and Defence guidelines, which dictate the boundaries of how cognitive warfare can be ethically and legally managed.

The Pillars: The Science of Influence

Three core scientific “pillars” support the house, providing the academic and biological knowledge necessary for cognitive intervention:

- Cognitive Neuroscience: Focuses on biological processes and neural connections in the brain to understand how they control mental activities. It explores how neurotechnology can be used for both kinetic (physical injury) and non-kinetic (polarization) effects.

- Cognitive and Behavioral Science: Concentrates on psychological interventions. This field studies how to exploit mental biases, heuristics, and reflexive thinking to influence a target’s decision-making process.

- Social and Cultural Science: Addresses trust and relational interventions at a societal level. It is used to identify a population’s “Cognitive Centers of Gravity,” such as national values or shared trust in institutions, which can then be targeted.

Above the pillars sit the upper levels of the house:

- Technology Enablers and Force Multipliers: Includes the use of AI, social media, deepfakes, and virtual reality to scale manipulations and evolve faster than human response times.

- Modus Operandi: The established methods or “playbooks” used to conduct warfare, such as “distract and dismiss” or the use of fake events.

- Cognitive Effects: The specific psychological impacts desired, such as attentional saturation, errors of judgment, and the triggering of cognitive biases.

- Situational Awareness / Sensemaking: The top operational floor focuses on how a target perceives their environment. Attackers aim to corrupt the accuracy of an adversary’s perception to create a “fog” of war.
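For readers who think in data structures, the levels described above can be written down as a small lookup table. This is only an illustrative sketch, not something the paper provides: the `Level` class, the `tier` labels ("foundation", "pillar", "floor"), and the `levels_in` helper are hypothetical names chosen here, and the `focus` strings are shortened paraphrases of the descriptions in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Level:
    name: str
    tier: str   # "foundation", "pillar", or "floor" (labels assumed for this sketch)
    focus: str  # short paraphrase of the description in this post


# Ordered from the base of the house to the top floor.
HOUSE_MODEL = (
    Level("ELSEI framework", "foundation", "ethical, legal, and social boundaries"),
    Level("Cognitive Neuroscience", "pillar", "biological processes and neural connections"),
    Level("Cognitive and Behavioral Science", "pillar", "biases, heuristics, reflexive thinking"),
    Level("Social and Cultural Science", "pillar", "trust and cognitive centers of gravity"),
    Level("Technology Enablers and Force Multipliers", "floor", "AI, social media, deepfakes, virtual reality"),
    Level("Modus Operandi", "floor", "playbooks such as distract and dismiss"),
    Level("Cognitive Effects", "floor", "attentional saturation, errors of judgment"),
    Level("Situational Awareness / Sensemaking", "floor", "how a target perceives its environment"),
)


def levels_in(tier: str) -> list[str]:
    """Return the names of all levels in the given tier, bottom to top."""
    return [level.name for level in HOUSE_MODEL if level.tier == tier]


if __name__ == "__main__":
    for tier in ("foundation", "pillar", "floor"):
        print(tier, "->", levels_in(tier))
```

Laying the model out this way makes the post's reading of the structure explicit: one foundation, three pillars, and four upper levels with sensemaking at the top.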

“The brain is the battlefield of the future.” — Dr. James Giordano

Why It Matters

National security depends on cognitive resilience. If citizens lose trust in elections, the judicial system, or their neighbors, the stability of the nation dissolves.

You do not need to fire a shot if the population cannot agree on what is real.

Cognitive warfare impacts how national values are defined and defended. It creates a “fog” of war: in complex environments, it becomes hard to judge what is actually happening.

Practical Implications for Policy Makers

- Invest in “prebuttal” strategies: release classified information early to debunk enemy lies before they spread.

- Create legal frameworks for AI and deepfakes to protect citizens from digital deception.

- Fund research into neuroscience and how digital tools affect human decision making.

Practical Implications for Public Affairs Officials

- Utilize “prebuttal” strategies: release classified information early to debunk enemy lies before they spread.

- Focus on the impact of messages (like polarization) rather than just counting “fake news” stories.

- Diversify monitoring: stop looking only at Facebook and start watching emerging platforms such as Telegram, TikTok, and 4chan.

- Use visual and audio content to fight back: text alone is not enough to counter multimodal attacks.
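The advice to measure impact rather than count stories can be made concrete with a toy calculation. The sketch below is an assumption-heavy illustration, not a method from the paper: it supposes you already have audience stance scores between -1 and 1 (from surveys or some classifier, both hypothetical here) and uses the spread of those scores as a crude stand-in for polarization. The function name `polarization_shift` and the sample numbers are invented for the example.

```python
from statistics import pstdev


def polarization_shift(before: list[float], after: list[float]) -> float:
    """Change in the spread of stance scores (each in [-1, 1]).

    A positive value means opinions moved further apart after exposure.
    Population standard deviation is used as a crude polarization proxy;
    this is an assumption of the sketch, not an established metric.
    """
    return pstdev(after) - pstdev(before)


if __name__ == "__main__":
    # Invented sample data: the audience starts near the center, then splits.
    before = [-0.1, 0.0, 0.1, 0.2]
    after = [-0.9, -0.8, 0.8, 0.9]

    fake_story_count = 3  # what a simple counting approach would report
    shift = polarization_shift(before, after)

    print("stories counted:", fake_story_count)
    print("polarization shift:", round(shift, 2))
```

The count says nothing about whether the audience changed; the shift, however rough, at least asks the question the bullet above cares about.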

Critiques and Areas for Future Study

The authors acknowledge that much research relies on WEIRD populations: Western, educated, industrialized, rich, and democratic. That narrows what can be inferred about everyone else.

Future work should:

- Analyze non-Western platforms such as WeChat, Weibo, and Douyin.

- Develop detection tools for real-time deepfake manipulation.

- Build models that parse memes, video, audio, and hybrid signals, not just text.

Final Thoughts

The paper frames cognitive warfare as a foreign threat: yet we must look closer. Foreign actors did not invent the systems that divide us. They found those systems already active. Digital platforms reward emotional intensity. Domestic actors use the same tools to win elections. The infrastructure of fracture runs itself.

The line between politics and warfare is thin: it sits at intent. Are we competing for power within a system, or are we breaking the system? Cognitive warfare weaponizes our own thoughts and biases against us.

Defending the mind starts with understanding the house built to house it.